Machine Learning Makes Massive Black Hole Data Darker

May 3

The first-ever image of a black hole was released by the Event Horizon Telescope team in 2019. Producing it required a lot of observation data and processing. Researchers have now produced a finer image using even more data from a new source: models generated using machine learning.

Beyond Bytes: Updated Unit Names Needed for Massive Digital Data

Nov 30

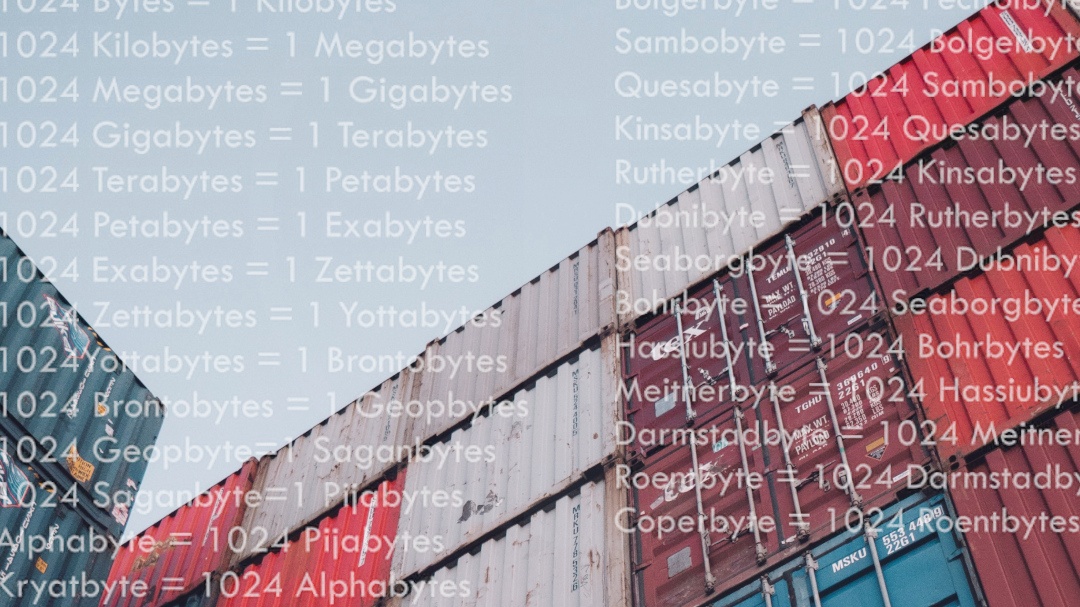

Gigabytes…Terabytes…Petabytes…Zettabytes… The names for units of computer memory follow a standard system of prefixes, but beyond a certain range they get a bit informal. For years, that system covered both the common and the very large volumes we see in our digital landscape. Until now. For the first time in 31 years, the International System of Units (SI) has been expanded to include two more prefixes for very large quantities.

Behind the Big Data: Revealing Our Galaxy's Black Hole

May 18

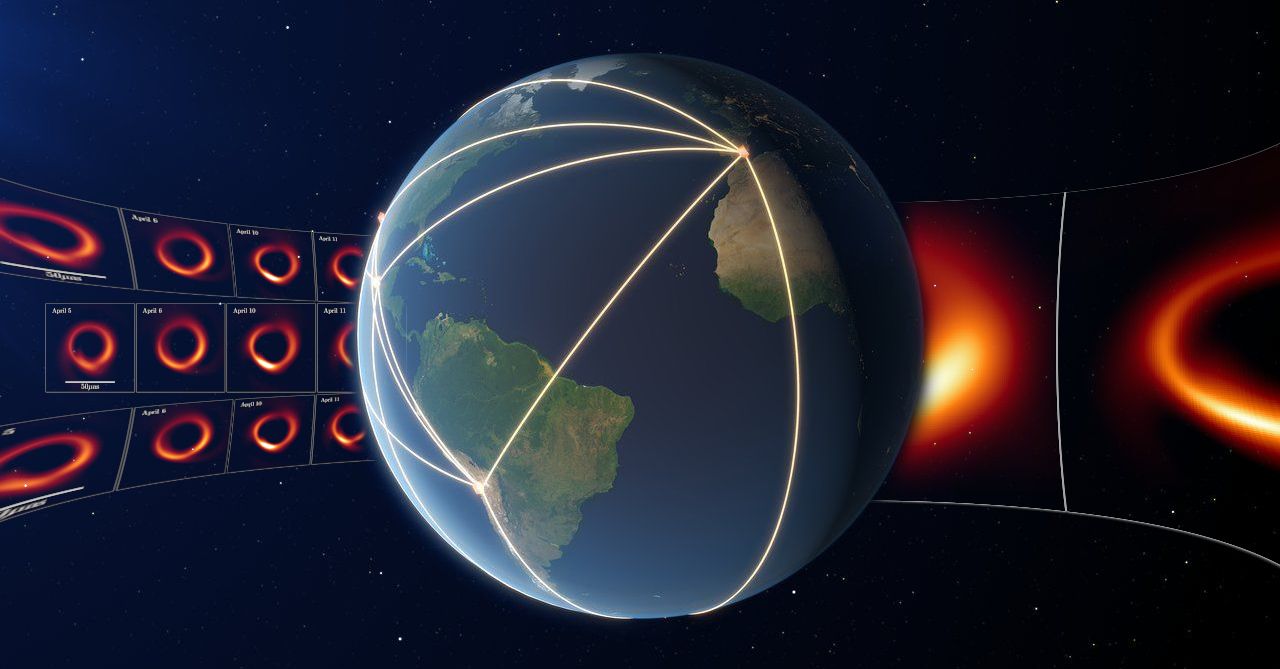

Astronomers from the Event Horizon Telescope project revealed the first-ever image of Sagittarius A*, the black hole that sits at the center of our galaxy. It was produced using observation data collected from a network of telescopes situated around the globe. How much data must you compute to get an image of an object that’s 27,000 light-years away and 4 million times more massive than our Sun, using a network of telescopes that together make a dish the size of the Earth?

Under the Sea - Microsoft's data center experiment resurfaces

Sep 17

This wasn’t data overboard but data submerged! Microsoft researchers have retrieved an underwater data center that spent two years resting on the ocean floor off the coast of Scotland, to see how it fared.

Spring Ahead and Look Towards the Future of Storage

Mar 18

Spring is the season for new beginnings, so let’s look at how new applications with new requirements, along with breakthrough storage technologies, will bring fresh approaches to the ways we use and store our data.

Black Hole: It Takes Big Data to See The Big Picture

Apr 11

The first-ever image of a black hole was revealed to the world by an international team of researchers from the Event Horizon Telescope (EHT) project, the culmination of years of work and of feats in astronomy and computer science. How much data must you compute to get an image of something that’s 55 million light-years away and has a mass 6.5 billion times that of our own Sun, using a network of 8 telescopes that combine to make a dish the size of the Earth?