3 Things Driving Data in 2024

Feb 2

In 2024, data trends are being driven by how much data we create, our capacity to store it, and the challenges of protecting it. Here’s a breakdown of those 3 key areas to watch this year in storage tech and data use.

Machine Learning Makes Massive Black Hole Data Darker

May 3

The first-ever image of a black hole was captured by the Event Horizon Telescope team in 2019. It required a lot of observation data and processing. Researchers have now produced a finer image using even more data from a new source: models generated using machine learning.

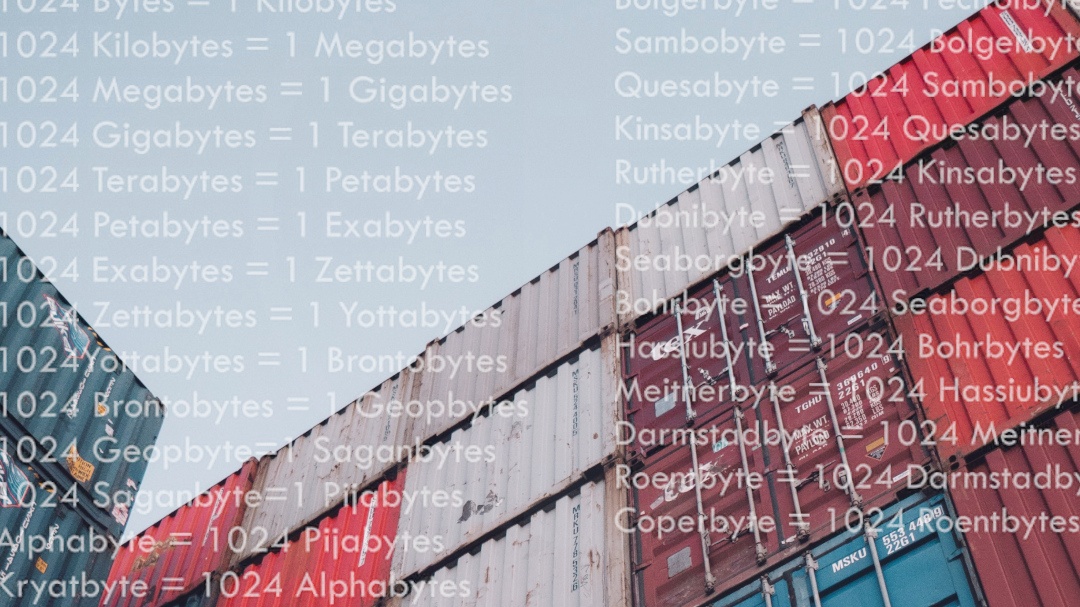

Beyond Bytes: Updated Unit Names Needed for Massive Digital Data

Nov 30

Gigabytes…Terabytes…Petabytes…Zettabytes… Naming units of computer memory follows a standard system of prefixes, but beyond a certain range it gets a bit informal. The common and even very large volumes we see in our digital landscape were covered for years. Until now. For the first time in 31 years the International System of Units (SI) has been expanded to include two more prefixes.

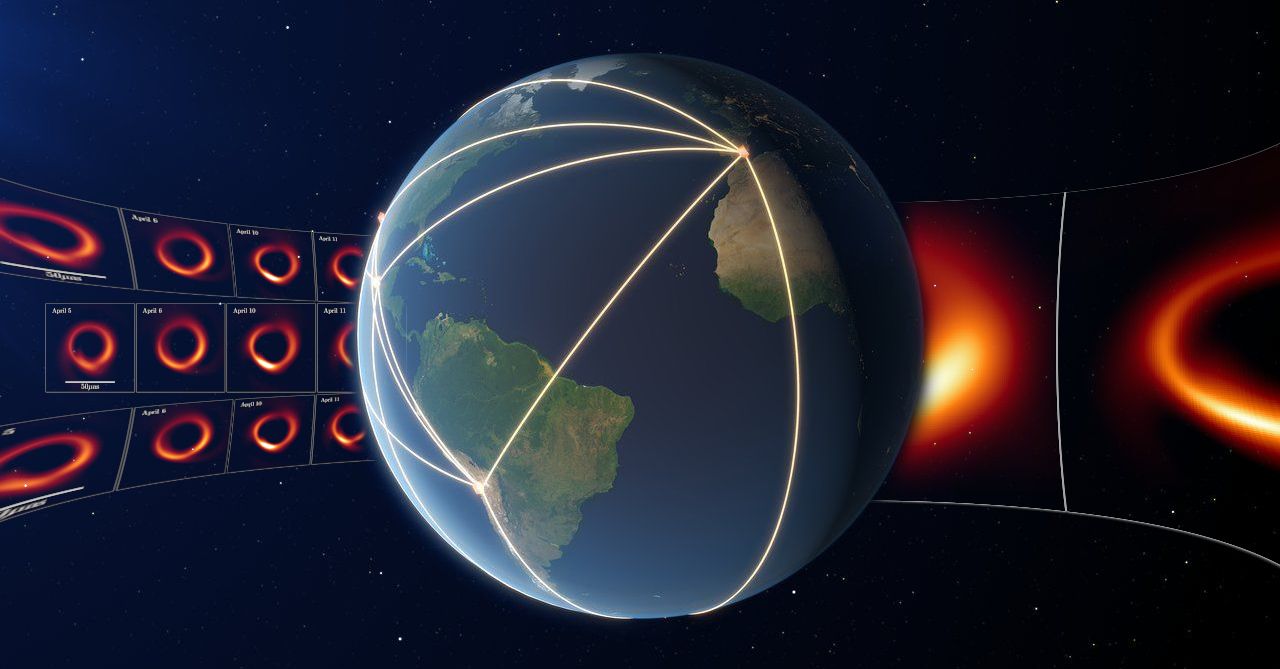

Behind the Big Data: Revealing Our Galaxy's Black Hole

May 18

Astronomers from the Event Horizon Telescope project revealed the first-ever image of Sagittarius A*, the black hole that sits at the center of our galaxy. It was produced using observation data collected from a network of telescopes situated around the globe. How much data must you compute to get an image of an object that’s 27,000 light-years away and 4 million times more massive than our Sun, from a network of telescopes that make a dish the size of the Earth?

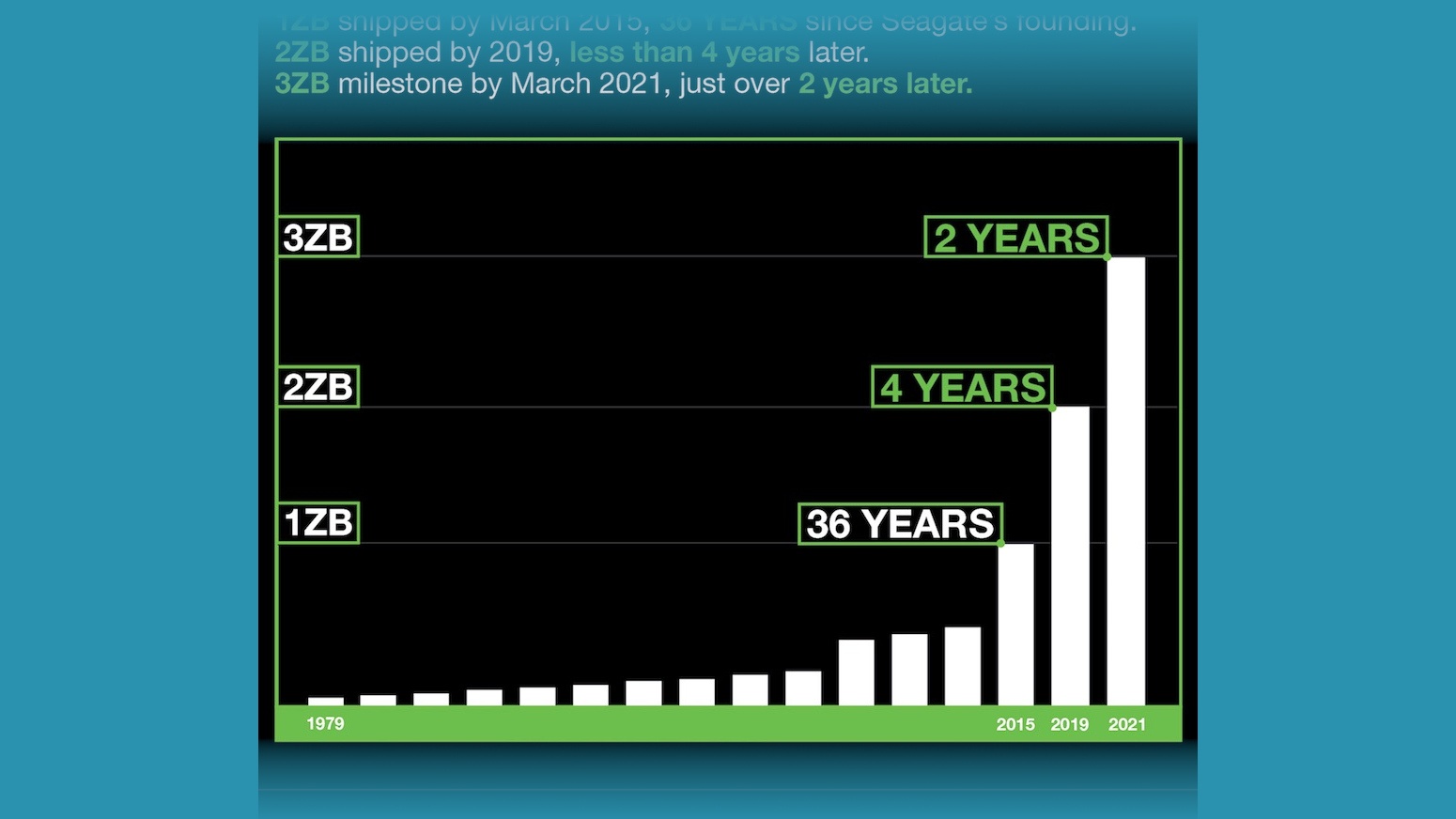

Shipping Capacity: Seagate reports 3 Zettabyte Storage Milestone

Apr 7

As creation and consumption of data continue to grow like never before, the storage giant claimed it has now shipped over three zettabytes of drive storage capacity, highlighting again how the amount of data in the digital world is not only climbing, but accelerating.

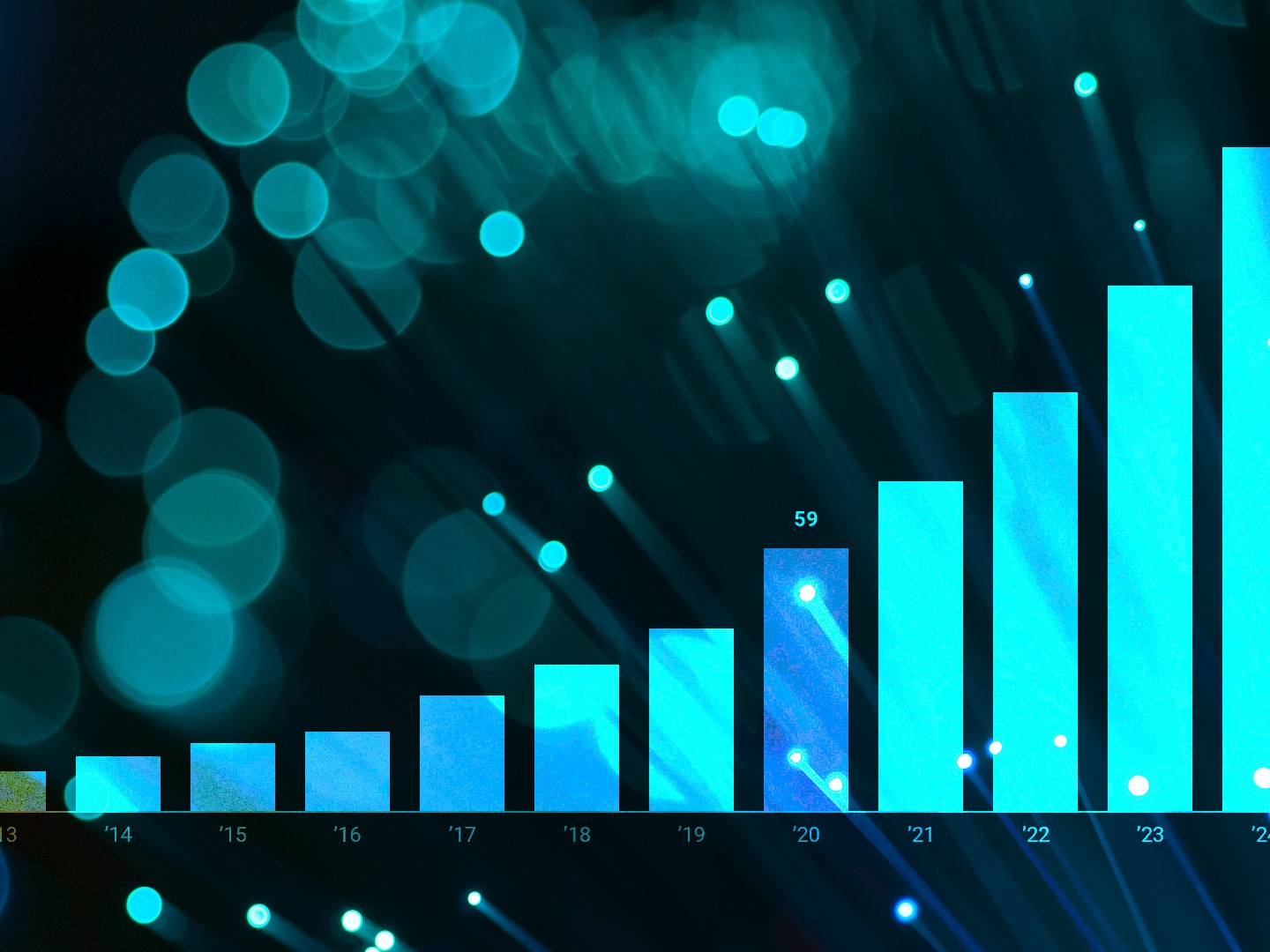

DataSphere Report Factors Pandemic Into Growth Forecast in Latest Update

Sep 9

A new update to IDC’s DataSphere report forecasts continued growth of the world’s created and consumed data with a shifting data landscape impacted by the COVID-19 pandemic. We break down some of the key insights.

Beyond Bytes: Navigating the Names Used to Measure Massive Units of Digital Data

May 20

Gigabytes…Terabytes…Petabytes…Zettabytes… Naming new units of computer memory may be fun but once you venture beyond the volumes we see in our current digital landscape it becomes a bit informal. Keeping track of just how large the numbers are isn’t so easy either. How much big data is there in a Yottabyte?
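
To get a rough feel for the scale behind that question, here is a minimal sketch of the arithmetic. It assumes decimal SI prefixes, where each step up is a factor of 1,000 (not the binary 1,024 convention some operating systems use). The names `SI_BYTE_UNITS`, `bytes_in`, and `units_per` are hypothetical, chosen only for this illustration.

```python
# Decimal SI units for bytes, in ascending order.
# Assumption: each step is a factor of 1,000 (not 1,024).
SI_BYTE_UNITS = ["B", "kB", "MB", "GB", "TB", "PB", "EB", "ZB", "YB"]


def bytes_in(unit: str) -> int:
    """Return the number of bytes in one of the given unit."""
    return 1000 ** SI_BYTE_UNITS.index(unit)


def units_per(larger: str, smaller: str) -> int:
    """Return how many `smaller` units fit in one `larger` unit."""
    return bytes_in(larger) // bytes_in(smaller)


if __name__ == "__main__":
    print(f"Bytes in one yottabyte: {bytes_in('YB'):,}")
    print(f"Terabytes in one yottabyte: {units_per('YB', 'TB'):,}")
```

Under these assumptions a yottabyte is 10^24 bytes, or a trillion terabytes.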

Black Hole: It Takes Big Data to See The Big Picture

Apr 11

The first-ever image of a black hole was revealed to the world by an international team of researchers from the Event Horizon Telescope (EHT) project in a culmination of years of work, astronomy and computer science feats. How much data must you compute to get an image of something that’s 55 million light-years away, has a mass 6.5 billion times that of our own sun, from a network of 8 telescopes that combine to make a dish the size of the Earth?

Dissecting the Digitization: Data Age 2025

Dec 18

The latest IDC Data Age 2025 whitepaper The Digitization of the World (from Edge to Core) is available now. We’re breaking down some of the key points in the report for you.

Amazon Goes Grocery Shopping, Buys Whole Foods

Jun 23

More and more, businesses are looking for ways to blend brick-and-mortar stores with online services. The company that rules the online marketplace? Amazon. But Bezos is branching out.

Paring Down the Data: Data Age 2025

Apr 24

IDC has released a long white paper, Data Age 2025, on the evolution of data in our lives. It covers how we have moved through different eras of data and computing and highlights key trends today. We break down the main points, talk about data’s new critical nature, and a bit about pizza toppings.

![Plugged In: The Internet of [Every] Thing](https://www.cbldatarecovery.com/blog/images/107.jpg)

Plugged In: The Internet of [Every] Thing

Jun 22

The Internet of Things (IoT) is the biggest thing since the Internet. And it is, quite literally, huge. Notably, “the Internet is as much a collection of communities as a collection of technologies.” It connects computers and people. Now, the Internet far surpasses its original definition as a vast network of computer systems. It has expanded rapidly and could potentially include everything. In the Internet of Things, all kinds of objects and technologies can effectively “talk” to one another.

Data Disasters: When a Big Data Loss Becomes a Giant Problem

Mar 16

Filing cabinets and safety deposit boxes are old-school. In this technological age, our personal, most valuable information is secured somewhere in the digital universe. It might be saved on an external hard drive or on an internal server. It might live in the Cloud, in an encrypted file, or in a Dropbox folder. Wherever your data is located, there’s no way to be sure it’s 100% safe or secure.

Disaster is inevitable. Sometimes it’s a fender bender or a stolen credit card. Other times, it’s a Big Data disaster.

What is Big Data?

Big Data is a recent ‘hot topic’ in the tech world. It is:

“A term that describes the large volume of data – both structured and unstructured – that inundates a business on a day-to-day basis. But it’s not the amount of data that’s important. It’s what organizations do with the data that matters. Big data can be analyzed for insights that lead to better decisions and strategic business moves.”

The second part of this definition is the critical piece; it’s not the amount of data, but what can be done with the data.